AI as a Tibetan Horse

AI is a powerful enough weapon that government controls are unavoidable. But what kind?

To build a state-of-the-art military, China’s Song dynasty needed tens of thousands of mounted warriors. But Chinese horses were famously weak. They could pull a cart or a plow, but lacked the stamina, bone density, and explosive power of a true warhorse. (Modern researchers figured out that most Chinese soil contains low levels of selenium, a trace mineral critical for muscular development. Selenium-deficient horses are softer and more fragile.)

My Kingdom for a Horse

The emperor understood nothing about selenium, but he was obsessed with horses raised on the mineral-rich, high-altitude pastures of the Mongolian and Tibetan plateaus. These animals developed the dense muscle mass required for long-range, high-intensity cavalry warfare. The Song emperor needed as many of these horses as he could get to defend Han China.

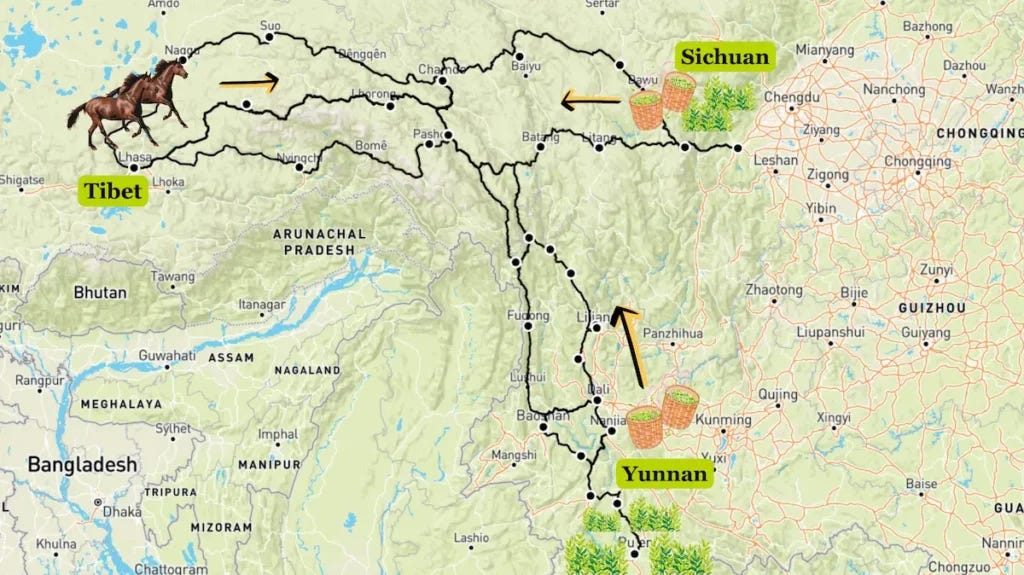

Fortunately for the emperor, the tribes scattered across the Tibetan highlands were happy to trade horses for tea. Tea helped Tibetans break down the fats in a diet rich in yak butter and meat, and it supplied vitamins in a high-altitude environment where greens were scarce. Soon, Tibetan tribes were exchanging tons of tea for thousands of horses along one of history’s most enduring trade routes: the ancient Tea Horse Road linking Tibet with Yunnan and Sichuan.[1]

Tibetan horses were essential military assets, so emperors moved to control them and the tea used to buy them. In 1074, the Song court established the Chamasi — the Tea and Horse Office — to monopolize the tea and horse trade, pacify Tibetan leaders, and prevent rival warlords from acquiring militarily capable horses.

It didn’t work. In 1215, Genghis Khan’s armies, with four or five horses per soldier, swept into northern China, by then ruled by the Jurchen Jin dynasty. His grandson, Kublai, completed the conquest in 1279, overthrowing the Southern Song. At its height, the Mongol empire was roughly four times the size of Rome’s.

Modern emperors still obsess over any technology that confers a clear military advantage and can proliferate to adversaries. They try to control these technologies by mixing four approaches.

Own it. States can seize technologies by nationalizing them. Starting with the Manhattan Project, the US nationalized the production of nuclear weapons. The Atomic Energy Act of 1946 made the federal government the sole legal owner of fissionable material and granted it a monopoly over nuclear weapons technology. The Second Amendment’s protection of the right to bear arms does not extend to nukes, or to bazookas, for that matter.

Similarly, the US Navy effectively took over the American radio industry during World War I. It helped engineer the creation of RCA in 1919, partly to keep wireless technology in American hands. A generation later, radar development was again highly classified and coordinated through MIT’s Radiation Laboratory.

Regulate it. States can heavily regulate sensitive technology. Cryptography was once considered so central to national security that, at the NSA’s urging, strong encryption was listed as a munition under the International Traffic in Arms Regulations (ITAR). After Phil Zimmermann released the PGP (Pretty Good Privacy) encryption program in 1991, federal prosecutors opened a criminal investigation into him for ‘munitions export without a license.’ MIT Press then published Zimmermann’s source code as a printed book, which the First Amendment unambiguously protected. In early 1996, the government dropped the case. The proliferation had outrun the regulation, and the First Amendment workaround had made enforcement absurd.

Restrict exports. States can lightly regulate a sensitive technology at home while keeping it out of adversaries’ hands. The US used ITAR to tightly control satellite and space technology for decades. Communications satellites sat on the US Munitions List from 1999 until 2014, significantly suppressing the growth of the US commercial space industry. Until 2000, the government deliberately degraded civilian GPS signals (a policy called Selective Availability) to limit their military value to adversaries.

Capture funding. Governments can shape technologies by collapsing the addressable market for private capital. Because ITAR made satellite and space hardware effectively non-exportable, federal procurement became the only viable customer for much of the industry for many years. The state doesn’t ban private investment so much as strangle the market that would justify it.

Recent Events Show That AI Will Soon Be Regulated

Modern governments are now obsessed with AI for the same reasons Chinese emperors were obsessed with warhorses: under some circumstances, it may become a decisive military technology.

Military potential. The technology has serious dual-use applications across cyber operations, bioweapons, autonomous systems, and mass disinformation.

Concentration. Frontier AI may seem diffuse, like horses on a plain, but a small number of developers control it, and their compute requirements create natural chokepoints.

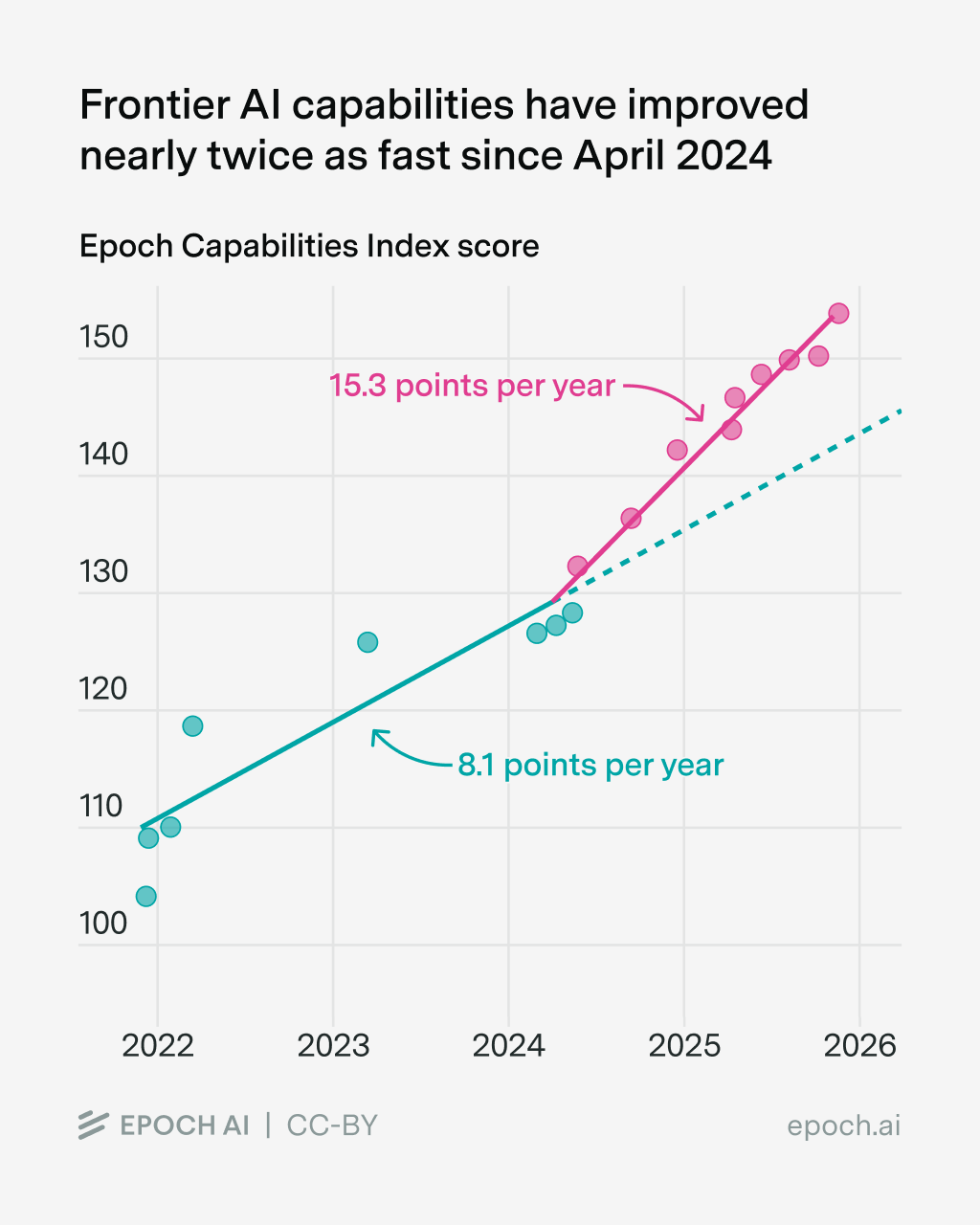

Rate of improvement. AI is improving so quickly that policymakers feel pressure to act before the technology outruns their ability to respond.

By several measures, frontier AI is getting stronger faster. This month, Anthropic announced its most advanced model, Mythos, and simultaneously declined to release it publicly after determining that it could autonomously discover and exploit security vulnerabilities in every widely used operating system, browser, and major piece of infrastructure software. The UK AI Security Institute, given early access to Mythos, found that it succeeded at expert-level hacking tasks 73 percent of the time, on a benchmark where every prior model had scored zero. Instead of a public release, Anthropic has shared the model with a small set of infrastructure defenders through an initiative it calls Project Glasswing.

An awkward dance inside the Trump administration followed. In February, the Pentagon had labeled Anthropic a ‘supply chain risk’ because the company refused to allow the military to use its models for autonomous weapons or domestic mass surveillance. Anthropic is in active litigation over the designation.

Then Mythos arrived. Within days, reports surfaced that the NSA was using it. Treasury and Federal Reserve officials began red-teaming Mythos after warning the largest US banks that AI-enabled attacks had become an existential risk to the financial system. The White House Chief of Staff and the Treasury Secretary met directly with Anthropic’s CEO. Pete Hegseth, whose Pentagon had branded Anthropic a national security threat, watched the rest of the government race to access the very capability he was trying to ban. Plainly, the US government needed Anthropic more than Anthropic needed the US government.

Technology that powerful will not roam free. The federal government has already put some early AI controls in place.

Export controls. The Commerce Department’s Bureau of Industry and Security (BIS) handles export controls and has floated rules on model-weight exports. Restrictions on exporting advanced chips and semiconductor manufacturing equipment to China (October 2022, expanded in 2023) are essentially an attempt to control AI capabilities one layer down the stack.

Reporting on dual use. A 2023 Biden Executive Order (EO 14110) required developers to report models trained above certain compute thresholds (initially 10^26 operations), along with red-teaming results for dual-use capabilities. The Trump administration rescinded the order in January 2025. Bet on the administration bringing it back, lightly repackaged.

Pre-deployment testing. The US and UK AI Safety Institutes (since renamed the Center for AI Standards and Innovation and the AI Security Institute, respectively) have gained pre-deployment access to frontier models for testing, including Mythos.

The Modern Chamasi: A 21st-Century Control Regime

Which tools will the US government deploy to police the military frontier of AI? Full nationalization seems unlikely. Unlike nuclear weapons, AI is genuinely dual-use, inherently diffuse, and backed by an industry with far more lobbying muscle than the physicists of 1945. Instead of a single Manhattan Project–style act, what is emerging looks more like a multi-layer defense.

Export controls. BIS — our modern Chamasi — is already restricting the ‘tea’ of the AI world: high-end chips and semiconductor manufacturing equipment. Noah Smith has an excellent breakdown of the current debates about how well export controls to China are working.

Pre-deployment evaluation. The voluntary Project Glasswing model, where Anthropic shares Mythos with infrastructure defenders, is a temporary truce. Expect executive orders to eventually mandate this kind of federal preview, turning voluntary cooperation into a federal license to operate.

Targeted secrecy. Rather than classifying entire models, the federal government is moving toward capability-specific control: classifying specific bioweapon synthesis, AI targeting, or offensive cyber outputs while leaving general commercial models available. Whether critical military capabilities can be cleanly separated from general-purpose ones and controlled on their own is the subject of ongoing debate.

Strategic procurement. As a dominant customer, the government is likely to shape AI through procurement, Defense Production Act authorities, and national-champion industrial policy. The pattern mirrors the early semiconductor era, when military and NASA purchases made up most of the early market for integrated circuits.

The Politics of Preemption

AI investors and executives are taking extraordinary steps to influence how their technology is regulated. The Leading the Future (LTF) PAC, which entered 2026 with roughly $70 million in cash on hand, is an industry effort to influence which branch of government regulates AI and on what terms.[2]

Leading the Future has targeted Alex Bores, a former data scientist turned New York State Assembly member. Bores co-sponsored the RAISE Act, a New York law that requires major AI developers to publish safety protocols and disclose serious incidents. By spending heavily against Bores in his run for Congress and intimidating others, LTF is working to block any legislative path to comprehensive federal control. Its goal is not to prevent regulation but to trade state-level safety protocols for a centralized, industry-friendly federal framework. (To me, this argues for electing Bores to Congress and working with him to federalize the RAISE Act and preempt state regulations in the process. Unusually for an elected leader, Bores once worked at Palantir, whose co-founder Joe Lonsdale is a major funder of LTF and appears blindly determined to punish apostates.)

There is a political tension at the heart of Leading the Future. The PAC is working alongside a Trump administration that preaches deregulation while practicing centralization. Trump wants to put AI policy under executive control rather than letting it settle into a bipartisan congressional framework with checks and balances. Trump prefers a Digital Executive that can invoke national security at will, without the constraints that legislation would impose. (Prediction: a16z, Lonsdale, Ron Conway, and other LTF backers will come to regret ignoring Trump’s authoritarian political instincts.)

Moreover, the delicate dance between Silicon Valley and the feds depends on AI improving slowly enough for regulators to learn as they go. But the technology is no longer cooperating, so Leading the Future may end up leading the past. Anthropic’s own leadership has suggested that open-source labs and Chinese developers could replicate Mythos-level hacking capabilities within 6 to 12 months.

If a Mythos-class capability leaks or if China uses an AI model to strike critical US infrastructure, a $70 million PAC will become irrelevant overnight. In the face of a concentrated catastrophe, the political economy will shift instantly from partnership to seizure.

The Emperor’s Dilemma

The Song emperors eventually learned a hard lesson: a state can monopolize the tea, build the Chamasi offices, and regulate trade routes, but it cannot change the geography of the plateau. The power of Tibetan and Mongolian horses grew from the soil itself — a natural advantage that eventually enabled Genghis Khan and his successors to crush or bypass the Song bureaucracy and redraw the map of the world.

Today’s emperor in Washington faces the same structural reality. He wants to build a regulatory fence around a technology that, like the Tibetan warhorse, derives its power from an environment — global compute, open research, distributed talent — that no single state can own or permanently dominate.

The US may temporarily control a 21st-century Tea Horse Road. But the Mythos moment proves that once the warhorse exists, the advantage shifts to those willing to ride. The government may not need to nationalize AI to control it. But if it cannot control critical AI risks, the federal government will face the fate of the Song: seeing a superior force charge the palace gates using the very technology it was trying to gatekeep.

ICYMI

Meta is building a virtual Mark Zuckerberg to interact with employees. If he listens better than the actual Mark, can he become CEO?

A plastic wrap that kills 94% of virus particles within an hour. Valuable!

China is launching a massive war on Alzheimer’s.

Every major US city is losing immigrants — an incredible own goal.

NASA discovers 10,000 new exoplanets. What?

Disapproval of Congress hits a record high of 86%.

Betting site Kalshi suspends three Congressmen for placing tiny bets on their own reelection. Betting on an event that you control (like whether you’ll run) is obviously problematic. But why is betting you’ll win a problem?

[1] But, you ask, why didn’t Tibetan horses succumb to a selenium-deficient diet when they relocated to the central and northern plains of China? Historical records and archaeological traces suggest that the Song military began feeding their newly acquired Tibetan horses tea dregs (the leftover leaves) or low-quality tea cakes mixed with fodder. Unlike native horses raised on local grass, Tibetan horses arrived with high baseline stores of selenium in their livers from the mineral-rich soils of the plateau. The addition of tea to their diet provided a continuous “maintenance dose” of selenium that native horses never received. So, ironically, the Song dynasty was exporting the very “medicine” needed to keep its imported horses alive.

[2] Leading the Future is heavily funded by venture capital firm Andreessen Horowitz (a16z), OpenAI President Greg Brockman, and Palantir co-founder Joe Lonsdale.